Agentic AI and AI Agents Explained

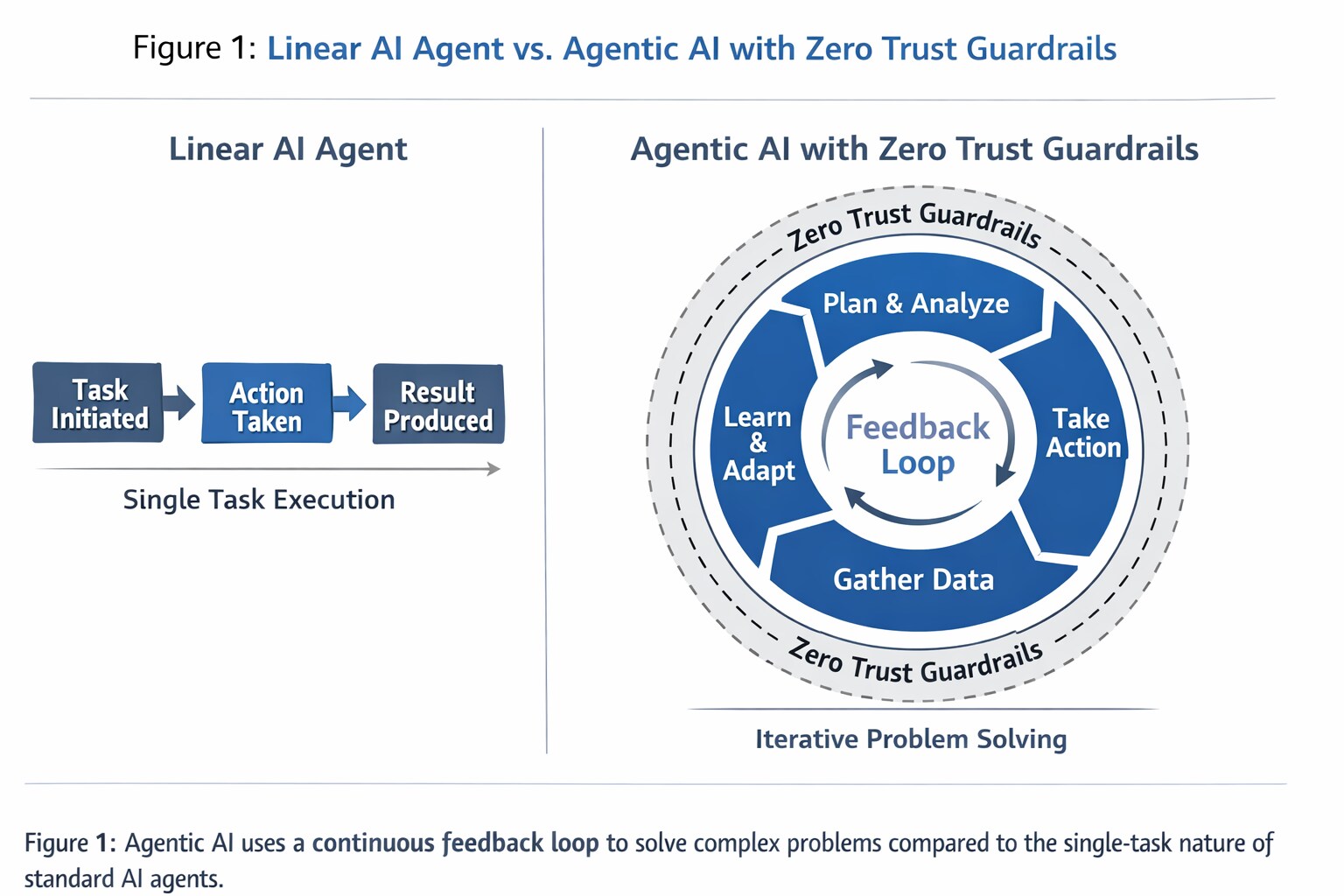

Understanding the distinction between these two concepts is vital for security leaders navigating the current "AI era." An AI agent is a specialized digital assistant. It is purpose-built to handle a single function, such as parsing email headers for phishing indicators or rotating encryption keys on a set schedule. These agents are highly efficient but lack situational awareness; they cannot "see" the broader security objective beyond their immediate task.

Agentic AI represents a leap from execution to agency. Instead of waiting for a prompt to perform a task, an agentic system is given a goal, such as "neutralize this ransomware outbreak," and independently determines which sequence of actions will achieve it. It may dispatch one agent to isolate infected hosts, another to audit access logs, and a third to initiate backup recovery, all while continuously monitoring the environment for new lateral movement.

This shift is significant because it moves the human analyst from a "pilot" role (directing every move) to a "commander" role (setting objectives and governing the AI's autonomous decisions). In an era where Unit 42 has observed attack speeds increasing 4x in a single year, the ability of agentic AI to react at machine speed across fragmented identity and cloud surfaces is no longer a luxury but a defensive necessity.

Structural Differences: From Task-Based to Goal-Oriented

The primary differentiator between these two technologies lies in the underlying architecture of decision-making. Standard AI agents rely on chain-of-thought prompting or static playbooks to move from point A to point B. Agentic AI employs a recursive reasoning loop that allows it to evaluate its own progress and pivot if a specific tactic fails.
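The loop described above can be illustrated with a minimal Python sketch. The tactic functions (`isolate_via_edr`, `isolate_via_firewall`), the host names, and the `contain` helper are hypothetical stand-ins rather than any real product's API. The point is only that the loop re-checks progress against the goal and falls back to another tactic when one fails.

```python
from typing import Callable

# Hypothetical tactics: each tries to isolate a host and reports success.
def isolate_via_edr(host: str) -> bool:
    # Assumption for the example: the EDR sensor on host-b is offline.
    return host != "host-b"

def isolate_via_firewall(host: str) -> bool:
    # Assumption for the example: a firewall rule always succeeds.
    return True

def contain(infected: set[str],
            tactics: list[Callable[[str], bool]],
            max_rounds: int = 5) -> tuple[set[str], list[tuple[str, str, bool]]]:
    """Isolate every infected host, pivoting to the next tactic on failure."""
    isolated: set[str] = set()
    log: list[tuple[str, str, bool]] = []
    for _ in range(max_rounds):
        # Evaluate progress against the goal before acting again.
        remaining = sorted(infected - isolated)
        if not remaining:
            break
        for host in remaining:
            for tactic in tactics:
                succeeded = tactic(host)
                log.append((host, tactic.__name__, succeeded))
                if succeeded:
                    isolated.add(host)
                    break  # goal met for this host; stop trying tactics
    return isolated, log
```

Calling `contain({"host-a", "host-b"}, [isolate_via_edr, isolate_via_firewall])` isolates both hosts; the log records the failed EDR attempt on `host-b` followed by the firewall fallback. A static playbook, by contrast, would stop at the first failure.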

Autonomy and Decision Logic

Standard agents operate on reactive logic. They require a specific input to produce a specific output. If the input deviates from the expected parameters, the agent typically fails or requires human troubleshooting. Agentic AI uses proactive logic. It assesses the current state of a system against the desired "gold" state and independently selects the tools required to bridge that gap.
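As a rough illustration of proactive logic, the sketch below compares a current state with a desired state and selects a tool for each gap. The state keys, the `TOOLS` registry, and the tool names are invented for this example and do not correspond to a real API.

```python
# Hypothetical "gold" state for an environment.
DESIRED = {"mfa_enforced": True, "edr_running": True, "public_s3": False}

# Hypothetical registry mapping each setting to the tool that can fix it.
TOOLS = {
    "mfa_enforced": "identity_provider.enforce_mfa",
    "edr_running": "edr.restart_sensor",
    "public_s3": "cspm.block_public_access",
}

def plan_remediation(current: dict, desired: dict, tools: dict) -> list[str]:
    """Return the tools needed to move the current state to the desired one."""
    plan = []
    for setting, wanted in desired.items():
        if current.get(setting) != wanted:
            # No known tool for this gap: hand it to a human instead.
            plan.append(tools.get(setting, f"escalate:{setting}"))
    return plan
```

A reactive agent would need an input naming the exact fix. Here the plan is derived from the gap itself, so the same function handles any combination of drifted settings.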

The Orchestration Layer

Agentic AI functions as a system of systems. It includes an orchestration layer that acts as a central nervous system. This layer manages a "swarm" of specialized agents, assigning them tasks based on their specific strengths. For example, the orchestrator might task a natural language agent with interpreting a new CISA directive while simultaneously tasking a technical agent with scanning the network for the newly identified vulnerabilities.
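A simplified sketch of this routing idea follows. The `Agent` and `Orchestrator` classes, the skill labels, and the agent names are assumptions made for illustration. The sketch also dispatches tasks one after another, whereas a production orchestrator would likely run them concurrently.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Agent:
    name: str
    skills: frozenset[str]

    def handle(self, task: str) -> str:
        # Placeholder for real work (an LLM call, a scanner run, and so on).
        return f"{self.name} handled {task!r}"

class Orchestrator:
    def __init__(self, agents):
        self.agents = list(agents)

    def dispatch(self, tasks: dict[str, str]) -> dict[str, str]:
        """Route each task to the first agent with the required skill.

        `tasks` maps a task description to the skill it requires.
        """
        results = {}
        for task, skill in tasks.items():
            agent = next((a for a in self.agents if skill in a.skills), None)
            results[task] = agent.handle(task) if agent else "unassigned: escalate to human"
        return results
```

With one agent holding an `interpret_directive` skill and another holding `vulnerability_scan`, the orchestrator sends the directive to the first and the scan to the second, and flags any task that no agent can cover.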

Persistence and Long-Term Memory

Unlike traditional agents that often operate in a stateless manner, forgetting the context once a task is complete, agentic systems maintain long-term memory. They record which strategies were successful in past incidents and apply those lessons to future planning. This persistence allows the system to build a contextual map of an organization’s unique environment, including "normal" behavior patterns and critical asset locations.
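One very small way to picture this kind of memory is a store that tracks which tactics worked for which incident types. The `IncidentMemory` class below is a hypothetical sketch that keeps everything in process memory; a real system would persist this data and would likely weigh far richer context than a success rate.

```python
from collections import defaultdict

class IncidentMemory:
    """Remember tactic outcomes per incident type and suggest the best one."""

    def __init__(self):
        # incident_type -> tactic -> [successes, attempts]
        self._stats = defaultdict(lambda: defaultdict(lambda: [0, 0]))

    def record(self, incident_type: str, tactic: str, succeeded: bool) -> None:
        entry = self._stats[incident_type][tactic]
        entry[0] += int(succeeded)
        entry[1] += 1

    def best_tactic(self, incident_type: str):
        """Return the tactic with the highest success rate, or None if unseen."""
        tactics = self._stats.get(incident_type)
        if not tactics:
            return None
        return max(tactics, key=lambda t: tactics[t][0] / tactics[t][1])
```

A stateless agent would start from scratch on every incident. With a store like this, planning for a new ransomware case can begin with whatever worked most often in earlier ones.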

The Evolving Role of AI in the Security Operations Center (SOC)

The transition to agentic systems is redefining the maturity model of the modern SOC. Security leaders must understand where their current capabilities sit on this spectrum to close the gap against automated adversaries effectively.

Level 1: Point Automation and Scripting

Most organizations begin here. This level involves using basic scripts or SOAR playbooks to automate repetitive actions. These are not agents in the true sense, as they lack any internal reasoning capability. They follow a strict "if-this-then-that" logic.
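For contrast with the later levels, a Level 1 playbook can be reduced to a lookup table. The alert types and action names below are illustrative only.

```python
# Hypothetical playbook: each known alert type maps to exactly one action.
PLAYBOOK = {
    "phishing_email": "quarantine_message",
    "malware_detected": "isolate_host",
}

def run_playbook(alert_type: str) -> str:
    """Return the scripted action, or defer to a human if no rule matches."""
    return PLAYBOOK.get(alert_type, "no_rule: needs human review")
```

Nothing here reasons about the alert. An input the table does not anticipate simply falls through to a person.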

Level 2: Specialized AI Agents for Triage

At this stage, organizations deploy LLM-powered agents to assist analysts. These agents can summarize alerts, explain complex code snippets, or suggest remediation steps. However, they still require a human to trigger every action and verify the output before moving to the next step in the incident lifecycle.

Level 3: Full Agentic Orchestration for Incident Response

This represents the emerging frontier of cyber defense. In mature implementations, agentic systems handle much of the detection and investigation pipeline autonomously, propose or execute response actions with defined guardrails, and escalate higher-stakes decisions for human authorization. Full autonomous TDIR remains aspirational for most environments.

Why the Distinction Matters for Cyber Defense

The rise of agentic AI is not limited to defenders. Threat actors are already leveraging agentic frameworks to automate the reconnaissance and exploitation phases of an attack.

Countering Agentic Attacks

Agentic attack tools can try thousands of variations of an exploit in seconds, searching for the one path that bypasses a specific firewall configuration. Defenders cannot counter an agentic attacker through manual intervention alone: a human-speed response cannot win against a machine-speed attack.

Managing Identity and Agent Hijacking

As agentic systems gain more autonomy, they also gain more permissions. This creates a new attack surface known as agent hijacking. If an attacker compromises the credentials an agentic system uses to authenticate its tools (API keys, OAuth tokens, service account credentials), they gain an autonomous "insider" capable of moving laterally and exfiltrating data without further external commands. Securing the identity and "thinking process" of agentic AI is a critical new pillar of enterprise security.
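One common way to limit the damage from a stolen agent credential is to keep each credential narrowly scoped and short-lived. The sketch below shows the idea; the `AgentCredential` class and the scope strings are hypothetical and not tied to any particular identity provider.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class AgentCredential:
    agent: str
    scopes: frozenset[str]
    expires_at: float  # Unix timestamp after which the credential is invalid

    def permits(self, scope: str, now: float) -> bool:
        """Allow an action only if the credential is unexpired and in scope."""
        return now < self.expires_at and scope in self.scopes
```

A triage agent issued only an `edr:read` scope could not be used to isolate hosts even if its credential were stolen, and the credential would stop working entirely once it expired.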

Prompt Injection and Manipulation

Agentic systems that process external data, whether web content, emails, documents, or tool outputs, are vulnerable to prompt injection. An attacker embeds malicious instructions in content the agent will read, causing it to take unintended actions using its own legitimate permissions. Unlike credential theft, no authentication boundary is crossed; the agent follows the injected instructions as if they came from its operator. This makes prompt injection one of the most immediate security concerns for agentic deployments.
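One partial mitigation, offered here as an assumption rather than a complete defense, is to treat any context that contains external content as tainted and to hold side-effecting tool calls for review while allowing read-only ones. The `ToolCall` type, the `gate_tool_calls` function, and the tool names are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ToolCall:
    tool: str
    argument: str

def gate_tool_calls(calls, context_has_untrusted_input: bool, read_only_tools):
    """Split proposed tool calls into approved and held-for-review lists.

    When the agent's context includes external content (emails, web pages,
    tool outputs), only read-only tools run without review.
    """
    approved, held = [], []
    for call in calls:
        if context_has_untrusted_input and call.tool not in read_only_tools:
            held.append(call)
        else:
            approved.append(call)
    return approved, held
```

If an email the agent has just read contains an instruction to forward mailbox contents, the resulting `send_email` call lands in the held list, while a `search_logs` call proposed in the same turn still runs.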

Use Cases & Real-World Examples

Unit 42 research highlights the double-edged nature of this technology. Threat actors now use agentic AI to accelerate attack lifecycles.

- Autonomous Attack Chains: Unit 42 developed an Agentic AI Attack Framework demonstrating that AI can compress a ransomware attack from initial compromise to exfiltration into just 25 minutes.

- Adaptive Phishing: Attackers use agents to scrape LinkedIn data and generate hyper-realistic lures. These agents "self-prompt" to try alternative social engineering channels if the first attempt fails.

- Identity Exploitation: Unit 42's 2026 Incident Response Report indicates that identity weaknesses are implicated in nearly 90% of investigations. Agentic AI can bridge human and machine identities to act independently across cloud and SaaS environments.

Implementing an Agentic Security Strategy

Moving toward agentic AI requires more than just new software; it requires a fundamental shift in how security teams trust and govern their tools.

Transitioning from Narrow Agents to Orchestrated Systems

- Consolidate or Federate Data Access: Agentic AI needs reliable, queryable access to the data required for reasoning. This can be achieved through unified data platforms, federated access layers, or tool-based retrieval from existing systems.

- Enable Tool Access: For an orchestrator to be effective, it must have secure access to the tools and data it needs, from EDR to cloud security posture management (CSPM). Emerging standards like Model Context Protocol (MCP) provide a consistent interface for agent tool access, though any integration path requires strong authentication and least-privilege scoping.

- Define Clear Objectives: Shift from writing "scripts" to defining "intents." Instead of telling the AI how to block an IP, tell it to ensure that no traffic from this segment leaves the country.
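The difference between a script and an intent can be sketched in a few lines of Python. Here the intent is expressed as data (a segment and the countries its traffic may reach) and checked against observed flows. The `Flow` and `EgressIntent` types, the segment name, and the country codes are invented for this example.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Flow:
    source_segment: str
    dest_country: str

@dataclass(frozen=True)
class EgressIntent:
    """Declares what must hold, not how to enforce it."""
    segment: str
    allowed_countries: frozenset[str]

    def violations(self, flows):
        """Return flows from this segment that reach a disallowed country."""
        return [
            f for f in flows
            if f.source_segment == self.segment
            and f.dest_country not in self.allowed_countries
        ]
```

How violations are remediated (a firewall rule, a routing change, a quarantine) is left to the agentic system; the team's job is to state the intent and review the outcome.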

Establishing Guardrails and Human-in-the-Loop Governance

Autonomy does not mean a lack of control. Effective agentic deployment utilizes "constrained autonomy." This involves defining high-impact actions, such as shutting down a production database, that the AI cannot take without explicit human authorization. This ensures that while the system moves fast, it stays aligned with business continuity requirements.
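A guardrail of this kind can be as simple as a check that runs before any action executes. The following is a minimal sketch; the action names and the `decide` function are assumptions for illustration.

```python
from typing import Optional

# Hypothetical list of actions that always need a human sign-off.
HIGH_IMPACT_ACTIONS = frozenset({"shutdown_production_database", "delete_backups"})

def decide(action: str, approved_by: Optional[str] = None) -> str:
    """Execute routine actions; hold high-impact ones until a human approves."""
    if action in HIGH_IMPACT_ACTIONS and approved_by is None:
        return "hold_for_approval"
    return "execute"
```

Routine containment steps pass straight through, so the system keeps its speed, while the few actions that could disrupt the business wait for a named approver.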

Agentic AI Best Practices

Securing autonomous systems requires shifting focus from model outputs to the entire execution workflow.